How does a Kafka producer work?

Michael Henderson

Published Apr 01, 2026

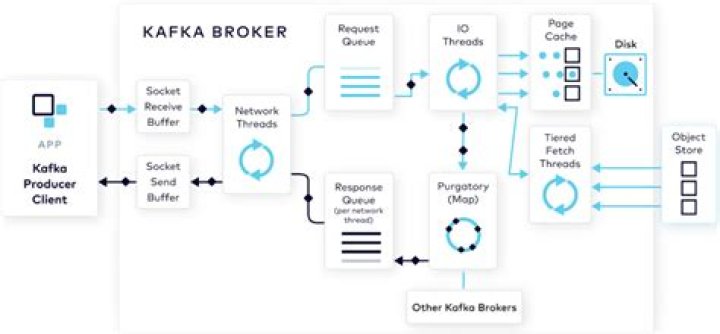

A producer partitioner maps each message to a topic partition, and the producer sends a produce request to the leader of that partition. The partitioners shipped with Kafka guarantee that all messages with the same non-empty key will be sent to the same partition.
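The key-to-partition mapping can be illustrated with a minimal sketch. It assumes a hypothetical helper, `choose_partition`, and uses CRC32 as a stand-in hash; Kafka's own default partitioner uses a murmur2 hash, so the actual partition numbers will differ.

```python
import zlib


def choose_partition(key: bytes, num_partitions: int) -> int:
    """Map a non-empty key to a partition index in [0, num_partitions)."""
    if not key:
        raise ValueError("this sketch only covers messages with a non-empty key")
    # Assumption: CRC32 stands in for the murmur2 hash used by Kafka's partitioner.
    return zlib.crc32(key) % num_partitions


if __name__ == "__main__":
    for key in (b"user-1", b"user-2", b"user-1"):
        print(key, "->", choose_partition(key, 6))
```

Because the hash depends only on the key, the two `user-1` messages land on the same partition, as long as the number of partitions does not change.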

What does a producer do in Kafka?

A producer publishes (writes) data to topics, which are divided into partitions. The producer client works out which partition, and therefore which broker, each record should be written to, so the user does not need to specify the broker or the partition.

How does Kafka Idempotent Producer work?

The idempotent producer solves the problem of duplicate messages caused by retries and provides ‘exactly-once delivery’ to a partition within a single producer session. For each session, the Kafka broker assigns a producer ID (PID) to the producer. The producer also assigns a monotonically increasing sequence number (SqNo) to each message before sending it, so the broker can detect and discard a retried message that it has already written.
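A toy model of the broker-side check may make this concrete. `PartitionLog` is a hypothetical class for illustration only; it tracks one sequence number per message, which is a simplification of what a real broker tracks.

```python
class PartitionLog:
    """Toy partition log that drops duplicate (producer id, sequence) writes."""

    def __init__(self):
        self.records = []
        self.last_seq = {}  # producer id -> last accepted sequence number

    def append(self, pid: int, seq: int, value: str) -> bool:
        last = self.last_seq.get(pid, -1)
        if seq <= last:
            return False  # already written: this is a retry, so ignore it
        if seq != last + 1:
            raise ValueError("sequence gap: an earlier message is missing")
        self.records.append(value)
        self.last_seq[pid] = seq
        return True


if __name__ == "__main__":
    log = PartitionLog()
    log.append(pid=7, seq=0, value="order-created")
    log.append(pid=7, seq=0, value="order-created")  # retried send, dropped
    log.append(pid=7, seq=1, value="order-paid")
    print(log.records)
```

The retried message is acknowledged as already seen rather than appended a second time, which is what makes the retry safe.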

How does Kafka producer and consumer work?

A producer is an entity/application that publishes data to a Kafka cluster, which is made up of brokers. A broker is responsible for receiving and storing the data when a producer publishes. A consumer then consumes data from a broker at a specified offset, i.e. position.

How are producer transactions implemented in Kafka?

When the producer is about to send data to a partition for the first time in a transaction, the partition is registered with the coordinator first. When the application calls commitTransaction or abortTransaction, a request is sent to the coordinator to begin the two phase commit protocol.
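The flow described above can be sketched as a toy coordinator. `ToyCoordinator` and its state names are illustrative assumptions, not Kafka's actual classes, and the sketch leaves out durability, timeouts and failure handling.

```python
class ToyCoordinator:
    """Toy transaction coordinator showing the two phases of a commit or abort."""

    def __init__(self):
        self.partitions = set()  # partitions registered in the current transaction
        self.state = "ONGOING"
        self.markers = {}  # partition -> "COMMIT" or "ABORT"

    def add_partition(self, topic: str, partition: int) -> None:
        # Called before the first write to a partition inside the transaction.
        self.partitions.add((topic, partition))

    def end_transaction(self, commit: bool) -> dict:
        outcome = "COMMIT" if commit else "ABORT"
        # Phase 1: record the decision before touching any partition.
        self.state = "PREPARE_" + outcome
        # Phase 2: write the outcome marker to every registered partition.
        for tp in sorted(self.partitions):
            self.markers[tp] = outcome
        self.state = "COMPLETE_" + outcome
        return self.markers


if __name__ == "__main__":
    coordinator = ToyCoordinator()
    coordinator.add_partition("orders", 0)
    coordinator.add_partition("payments", 2)
    print(coordinator.end_transaction(commit=True))
    print(coordinator.state)
```

Recording the decision first means that, in a real system, the second phase can be replayed after a crash without changing the outcome.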

What happens when Kafka broker goes down?

During a broker outage, all partition replicas on the broker become unavailable, so the affected partitions’ availability is determined by the existence and status of their other replicas. If a partition has no additional replicas, the partition becomes unavailable.
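As a rough illustration, the function below checks which partitions still have at least one replica on a live broker. The name `available_partitions` and the data layout are assumptions, and the sketch ignores whether the surviving replicas are in sync.

```python
def available_partitions(replicas: dict, live_brokers: set) -> set:
    """Return the partitions that still have a replica on a live broker."""
    return {
        partition
        for partition, brokers in replicas.items()
        if any(broker in live_brokers for broker in brokers)
    }


if __name__ == "__main__":
    # partition name -> ids of the brokers hosting its replicas (made-up layout)
    replicas = {"orders-0": [1, 2], "orders-1": [2, 3], "logs-0": [3]}
    # Broker 3 is down, so only brokers 1 and 2 are live.
    print(sorted(available_partitions(replicas, live_brokers={1, 2})))
```

Here `logs-0` has no replica other than the one on the failed broker, so it is the only partition that becomes unavailable.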

How does a producer send data to a topic using the Kafka client?

The Kafka producer connects to the cluster, which is running on localhost and listening on port 9092, and posts messages to the topic “sampleTopic”. When you run the shell script, a console appears, from which we can start sending messages to the Kafka cluster.

Why is Kafka so fast?

Compression & Batching of Data: Kafka batches the data into chunks which helps in reducing the network calls and converting most of the random writes to sequential ones. It’s more efficient to compress a batch of data as compared to compressing individual messages.

Can a producer be a consumer in Kafka?

Absolutely. There is no reason why you should not be able to instantiate a consumer and a producer in the same client.

How does Kafka work under the hood?

Kafka is built using ZooKeeper. … Kafka uses ZooKeeper for coordination of brokers and also stores metadata of topics, partitions etc. The internal working of ZooKeeper is out of this blog’s scope. Since Kafka is dependent on ZooKeeper, before starting the Kafka cluster, we ensure that ZooKeeper is up and running.

How does Kafka ensure exactly once?

A batch of data is consumed by a Kafka consumer from one cluster (called “source”) then immediately produced to another cluster (called “target”) by Kafka producer. To ensure “Exactly-once” delivery, the producer creates a new transaction through a “coordinator” each time it receives a batch of data from the consumer.

Do we need ZooKeeper for running Kafka?

Traditionally, yes: Kafka was designed to depend on ZooKeeper, which is responsible for managing the Kafka cluster. It keeps a list of all Kafka brokers and notifies Kafka when a broker or partition goes down, or when a new broker or partition comes up. Newer Kafka releases can instead run in KRaft mode, which replaces ZooKeeper with a built-in Raft-based controller quorum.

What is enable auto commit in Kafka?

Auto commit is enabled out of the box and by default commits every five seconds. For a simple data transformation service, “processed” means, simply, that a message has come in and been transformed and then produced back to Kafka.

What is min ISR?

The ISR is simply all the replicas of a partition that are “in-sync” with the leader. … At a minimum, the ISR will consist of the leader replica, plus any follower replicas that are also considered in-sync.

What is Kafka producer API?

The Kafka Producer API allows applications to send streams of data to the Kafka cluster. The Kafka Consumer API allows applications to read streams of data from the cluster.

Does Kafka support transactions?

Yes, Kafka supports transactions, but only for reads and writes within Kafka itself, across its own topics and partitions. We can atomically receive an incoming message, process it, send some outgoing messages, and reliably acknowledge receipt of the original message at the same time.

How do you make a Kafka producer?

- Provision your Kafka cluster. …

- Initialize the project. …

- Write the cluster information into a local file. …

- Download and setup the Confluent CLI. …

- Create a topic. …

- Configure the project. …

- Add application and producer properties. …

- Update the properties file with Confluent Cloud information.

How do you start a producer in Kafka?

- First, start a local instance of the ZooKeeper server: ./bin/zookeeper-server-start.sh config/zookeeper.properties

- Next, start a Kafka broker: ./bin/kafka-server-start.sh config/server.properties

- Now, create the producer with all configuration defaults and use zookeeper based broker discovery.

How do you send data to Kafka producer?

The following steps are used to launch a producer:

- Step1: Start the zookeeper as well as the kafka server.

- Step2: Type the command: ‘kafka-console-producer’ on the command line. …

- Step3: After knowing all the requirements, try to produce a message to a topic using the command:

What are replicas in Kafka?

Replication in Kafka happens at the partition granularity where the partition’s write-ahead log is replicated in order to n servers. Out of the n replicas, one replica is designated as the leader while others are followers. … A message is committed only after it has been successfully copied to all the in-sync replicas.
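The commit rule can be sketched with a small calculation. It assumes a hypothetical `high_watermark` helper and represents each replica by its log end offset, meaning the offset of the next message it will write; messages below the returned offset have been copied to every in-sync replica.

```python
def high_watermark(log_end_offsets: dict, isr: set) -> int:
    """Return the offset up to which every in-sync replica has the data."""
    # Replicas outside the ISR do not hold back the commit point.
    return min(log_end_offsets[replica] for replica in isr)


if __name__ == "__main__":
    # broker id -> log end offset for one partition (made-up numbers)
    log_end_offsets = {1: 10, 2: 8, 3: 5}
    isr = {1, 2}  # broker 3 has fallen behind and is out of the ISR
    print(high_watermark(log_end_offsets, isr))
```

With these numbers, the slowest in-sync replica determines the commit point, while the lagging replica on broker 3 is ignored.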

What is min insync replicas?

min.insync.replicas is a config on the broker that denotes the minimum number of in-sync replicas that must exist for a broker to allow acks=all requests. That is, requests with acks=all will not be processed, and will receive an error response, if the number of in-sync replicas is below the configured minimum.
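A minimal sketch of that check follows. The function `handle_produce` and the exception class are hypothetical names for illustration, not broker code.

```python
class NotEnoughReplicas(Exception):
    """Raised by this sketch when the ISR is smaller than the configured minimum."""


def handle_produce(acks: str, isr_size: int, min_insync_replicas: int) -> str:
    """Accept or reject a produce request based on the current ISR size."""
    if acks == "all" and isr_size < min_insync_replicas:
        raise NotEnoughReplicas(
            f"ISR has {isr_size} replicas, need at least {min_insync_replicas}"
        )
    # Requests with other acks settings are not subject to this check.
    return "accepted"


if __name__ == "__main__":
    print(handle_produce("all", isr_size=3, min_insync_replicas=2))
    print(handle_produce("1", isr_size=1, min_insync_replicas=2))
    try:
        handle_produce("all", isr_size=1, min_insync_replicas=2)
    except NotEnoughReplicas as err:
        print("rejected:", err)
```

The second call shows that only acks=all requests are gated by the setting.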

What happens if ZooKeeper goes down in Kafka?

For example, if you lost the Kafka data in ZooKeeper, the mapping of replicas to Brokers and topic configurations would be lost as well, making your Kafka cluster no longer functional and potentially resulting in total data loss. …

How do I run a Kafka producer in Eclipse?

- Download the zip to the Desktop (on the local system).

- Extract the downloaded zip.

- Go to Eclipse IDE -> File -> Import -> Gradle (STS) project -> Next -> Browse to the extracted Kafka directory on your local disk -> click ‘Build Model’ -> Next -> Finish.

What is Kafka consumer and producer and broker?

A Kafka cluster consists of one or more servers (Kafka brokers) running Kafka. Producers are processes that push records into Kafka topics within the broker. A consumer pulls records off a Kafka topic.

Can a producer also be a consumer?

Yes. A single application can be both a producer and a consumer, for example by reading records from one topic and writing records to another.

What is zero copy Kafka?

“Zero-copy” describes computer operations in which the CPU does not perform the task of copying data from one memory area to another. This is frequently used to save CPU cycles and memory bandwidth when transmitting a file over a network.[1]
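For illustration, Python's standard library exposes the same idea through `socket.sendfile`, which uses the operating system's `sendfile` call where it is available and falls back to ordinary sends otherwise. The helper name `send_file_zero_copy` is an assumption, and this is a sketch of the general technique rather than Kafka's own code.

```python
import os
import socket
import tempfile


def send_file_zero_copy(path: str, sock: socket.socket) -> int:
    """Send a whole file over a connected stream socket; return bytes sent."""
    with open(path, "rb") as f:
        # Where os.sendfile is available, the kernel moves the bytes from the
        # file to the socket without copying them through this process.
        return sock.sendfile(f)


if __name__ == "__main__":
    left, right = socket.socketpair()
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(b"hello kafka")
    try:
        sent = send_file_zero_copy(tmp.name, left)
        left.close()
        print(sent, right.recv(64))
    finally:
        right.close()
        os.unlink(tmp.name)
```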

What is latency in Kafka?

Latency: Kafka provides the lowest latency at higher throughputs, while also providing strong durability and high availability. Kafka in its default configuration is faster than Pulsar in all latency benchmarks, and it is faster up to p99.9 when set to fsync on every message.

Does Kafka use RAM?

Kafka avoids caching messages in its own process memory; it achieves low-latency message delivery through sequential I/O and the zero-copy principle. Sequential I/O: Kafka relies heavily on the filesystem, and therefore on the operating system's page cache, for storing and caching messages.

How does Kafka work in a nutshell?

All Kafka messages are organized into topics. If you wish to send a message you send it to a specific topic and if you wish to read a message you read it from a specific topic. A consumer pulls messages off of a Kafka topic while producers push messages into a Kafka topic.
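The push and pull model can be mimicked with a toy in-memory broker. `ToyBroker` is a hypothetical class that treats each topic as a single append-only list, ignoring partitions, replication and persistence.

```python
from collections import defaultdict


class ToyBroker:
    """Toy broker: each topic is one append-only list of messages."""

    def __init__(self):
        self.topics = defaultdict(list)

    def produce(self, topic: str, message: str) -> int:
        """Append a message to a topic and return its offset."""
        self.topics[topic].append(message)
        return len(self.topics[topic]) - 1

    def consume(self, topic: str, offset: int, max_messages: int = 10) -> list:
        """Read up to max_messages from a topic, starting at offset."""
        return self.topics[topic][offset:offset + max_messages]


if __name__ == "__main__":
    broker = ToyBroker()
    broker.produce("greetings", "hello")
    broker.produce("greetings", "world")
    print(broker.consume("greetings", offset=0))
    print(broker.consume("greetings", offset=1))
```

Reading does not remove anything from the topic; the consumer simply chooses which offset to read from.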

How do consumers and producers communicate in Apache Kafka?

In Kafka, producers are applications that write messages to a topic and consumers are applications that read records from a topic. Kafka provides two APIs for communicating with your Kafka cluster through your code: the producer API, which produces events, and the consumer API, which consumes events.

What is a broker in Kafka?

A broker is a Kafka server that runs in a Kafka cluster. Kafka brokers form a cluster, and a Kafka cluster consists of many Kafka brokers on many servers. The term “broker” is sometimes also used more loosely to refer to a logical system or to Kafka as a whole.