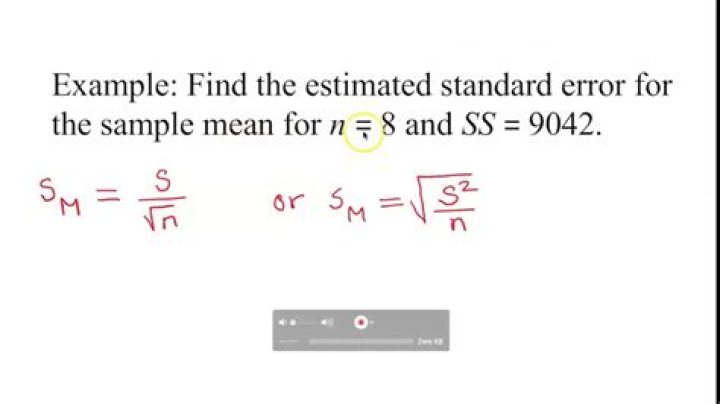

How do you calculate estimated standard error?

John Castro

Published Feb 28, 2026

How do you calculate estimated standard error?

The estimated standard error is calculated by dividing the sample standard deviation by the square root of the sample size. It indicates the precision of a sample mean by accounting for the sample-to-sample variability of sample means.
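As a minimal sketch of that calculation, the snippet below divides the sample standard deviation by the square root of the sample size. The function name `standard_error` and the data values are made up for illustration, and the sketch assumes the sample standard deviation (n - 1 denominator) is the one intended.

```python
import math
import statistics


def standard_error(sample):
    """Estimated standard error of the mean: s / sqrt(n)."""
    n = len(sample)
    if n < 2:
        raise ValueError("need at least two observations")
    s = statistics.stdev(sample)  # sample standard deviation (n - 1 denominator)
    return s / math.sqrt(n)


data = [2, 4, 4, 4, 5, 5, 7, 9]  # made-up values for illustration
se = standard_error(data)
print(f"standard error = {se:.4f}")
```

With these eight values the standard deviation is about 2.138, so the standard error comes out at roughly 0.756.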

What is the estimated standard error for the sample mean difference?

If you took many samples and computed the mean of each, the standard deviation of that set of mean values would be the standard error. Instead of taking many samples, you can estimate the standard error from a single sample by dividing its standard deviation by the square root of the sample size.
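One way to see why the single-sample shortcut works is a small simulation. The sketch below assumes a normally distributed population with hypothetical parameters (mean 50, standard deviation 10) and compares the standard deviation of many sample means with the estimate from one sample.

```python
import math
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable

POP_MEAN, POP_SD = 50.0, 10.0  # hypothetical population parameters
N = 25                         # observations per sample
NUM_SAMPLES = 5000             # how many samples to draw

# Draw many samples and record each sample's mean.
sample_means = [
    statistics.fmean(random.gauss(POP_MEAN, POP_SD) for _ in range(N))
    for _ in range(NUM_SAMPLES)
]

# Standard deviation of the collection of sample means.
empirical_se = statistics.stdev(sample_means)

# Estimate from a single sample only: s / sqrt(n).
one_sample = [random.gauss(POP_MEAN, POP_SD) for _ in range(N)]
estimated_se = statistics.stdev(one_sample) / math.sqrt(N)

# Value implied by the population parameters: 10 / sqrt(25) = 2.0.
theoretical_se = POP_SD / math.sqrt(N)

print(empirical_se, estimated_se, theoretical_se)
```

The empirical value should land close to 2.0. The single-sample estimate is noisier, because it relies on only 25 observations.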

What is the estimated standard error of the mean and how do we calculate it?

To calculate the standard error of the mean, you need two pieces of information: the standard deviation and the number of observations in the sample. The standard error is the standard deviation divided by the square root of the number of observations.

What is a good standard error?

When the sampling distribution of the mean is approximately normal, about 68% of all sample means fall within one standard error of the population mean, and about 95% fall within two standard errors. The smaller the standard error, the narrower the spread and the more likely it is that any given sample mean is close to the population mean. A small standard error is therefore a good thing.
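The 68% and 95% figures can be checked numerically. The sketch below assumes a normal population with hypothetical parameters (mean 100, standard deviation 15, samples of 36) and counts how many simulated sample means fall within one and two standard errors of the population mean.

```python
import math
import random
import statistics

random.seed(1)  # fixed seed so the run is repeatable

POP_MEAN, POP_SD, N = 100.0, 15.0, 36  # hypothetical values
se = POP_SD / math.sqrt(N)             # 15 / 6 = 2.5

# Simulate 10,000 sample means.
means = [
    statistics.fmean(random.gauss(POP_MEAN, POP_SD) for _ in range(N))
    for _ in range(10_000)
]

# Share of sample means within one and two standard errors.
within_1 = sum(abs(m - POP_MEAN) <= se for m in means) / len(means)
within_2 = sum(abs(m - POP_MEAN) <= 2 * se for m in means) / len(means)

print(f"within 1 SE: {within_1:.3f}, within 2 SE: {within_2:.3f}")
```

The two shares should come out near 0.68 and 0.95, with small differences from run to run if the seed is changed.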

What is the difference between standard error and standard error of mean?

The standard deviation (SD) measures the amount of variability, or dispersion, of the individual data values around the mean, while the standard error of the mean (SEM) measures how far the sample mean (average) of the data is likely to be from the true population mean. The SEM is smaller than the SD whenever the sample has more than one observation, because it is the SD divided by the square root of the sample size.

How do you calculate SE from SD?

The standard error (SE), or standard error of the mean (SEM), is the standard deviation of the sampling distribution of the mean. The formula for the SE is the SD divided by the square root of the number of values in the data set (n) …

Is standard error the same as standard error of the mean?

No. Standard Error is the standard deviation of the sampling distribution of a statistic. Confusingly, the estimate of this quantity is frequently also called “standard error”. The [sample] mean is a statistic and therefore its standard error is called the Standard Error of the Mean (SEM).

How much standard error is acceptable?

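A value of 0.8-0.9 here refers to a reliability coefficient rather than to the standard error itself: providers and regulators alike see it as an adequate demonstration of acceptable reliability for an assessment. Whether a standard error is acceptable depends on the scale of the measurement and the precision required.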

What is a high standard error value?

A high standard error shows that sample means are widely spread around the population mean, so your sample may not closely represent your population. A low standard error shows that sample means are closely distributed around the population mean, so your sample is more likely to be representative of your population.

What is 90% confidence interval?

A 90 percent confidence interval comes from a procedure that captures the true value in about 90 percent of repeated samples, so about 10 percent of such intervals miss it. For the same data, a 99 percent confidence interval is wider than a 95 percent confidence interval (for example, plus or minus 4.5 percent instead of 3.5 percent).
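To illustrate how the interval widens as the confidence level rises, here is a rough sketch that builds intervals for a mean as mean ± z × standard error. It assumes a normal approximation, and for small samples a t critical value would usually be more appropriate. The function name `mean_confidence_interval` and the data are made up for illustration.

```python
import math
import statistics
from statistics import NormalDist


def mean_confidence_interval(sample, level):
    """Normal-approximation confidence interval for the mean."""
    n = len(sample)
    mean = statistics.fmean(sample)
    se = statistics.stdev(sample) / math.sqrt(n)  # estimated standard error
    z = NormalDist().inv_cdf(0.5 + level / 2)     # two-sided critical value
    return mean - z * se, mean + z * se


data = [2, 4, 4, 4, 5, 5, 7, 9]  # made-up values for illustration

ci90 = mean_confidence_interval(data, 0.90)
ci95 = mean_confidence_interval(data, 0.95)
ci99 = mean_confidence_interval(data, 0.99)

for level, (low, high) in [(90, ci90), (95, ci95), (99, ci99)]:
    print(f"{level}%: ({low:.3f}, {high:.3f})")
```

All three intervals are centered on the sample mean of 5, and each higher confidence level produces a wider interval.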

How do you calculate standard error in statistics?

The standard error is the standard deviation of the sampling distribution of a statistic, or an estimate of that standard deviation. For the sample mean, the formula is SEx̄ = s / √n, where SEx̄ = standard error of the mean, s = sample standard deviation, and n = number of observations in the sample.

How to calculate standard error?

First, collect a sample from the population using a chosen sampling method.

How do you calculate standard error of measurement?

Use the formula σM = σ/√N to determine the standard error of the mean. In this formula, σM is the standard error of the mean (the number you are looking for), σ is the standard deviation of the original distribution, and √N is the square root of the sample size.

How do you calculate standard error of difference?

Let na = the size of sample A and nb = the size of sample B. The standard error of a sampling distribution of sample-mean differences is calculated from the standard deviation (sd) of the source population together with the values of na and nb.
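The source does not spell out the formula, so the sketch below uses the standard result for two independent samples, sqrt(sd_a² / na + sd_b² / nb). When both samples come from the same source population, the two standard deviations are equal. The function name `se_of_difference` and the numbers are hypothetical.

```python
import math


def se_of_difference(sd_a, n_a, sd_b, n_b):
    """Standard error of the difference between two independent sample means."""
    return math.sqrt(sd_a**2 / n_a + sd_b**2 / n_b)


# Both samples drawn from one source population with sd = 10 (hypothetical).
se_diff = se_of_difference(10.0, 25, 10.0, 100)
print(f"standard error of the difference = {se_diff:.4f}")
```

Here the variance terms are 100 / 25 = 4 and 100 / 100 = 1, so the result is the square root of 5, about 2.236.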